
Jim Worthey • Lighting & Color Research • jim@jimworthey.com • 301-977-3551 • 11 Rye Court, Gaithersburg, MD 20878-1901, USA


| Legacy

Understanding |

|

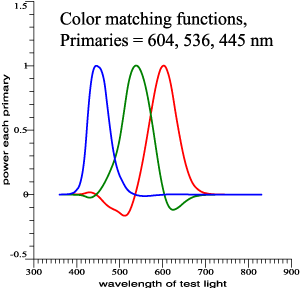

Fictitious but realistic color-matching data. |

|

|

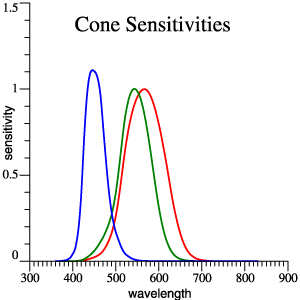

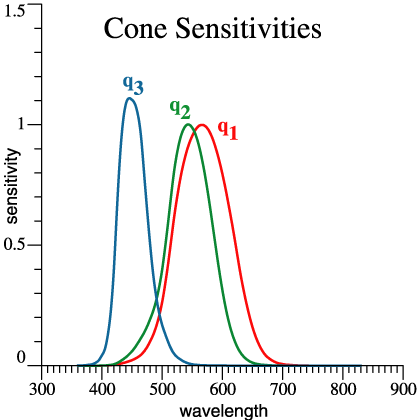

Cones,

red,

green

and blue. |

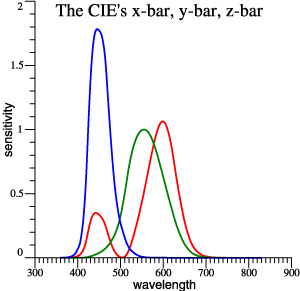

| Traditional

x-bar,

y-bar, z-bar |

|

|

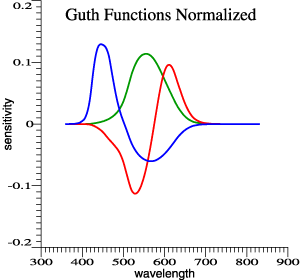

Guth's 1980

model,

normalized. Achromatic function is proportional to

y-bar. |

|

Linear

transformations

of color-matching data predict the same matches.

This is Figure 1. < The only numbered figure! > |

|||

| How

does a set of 3 functions predict color matches? |

|

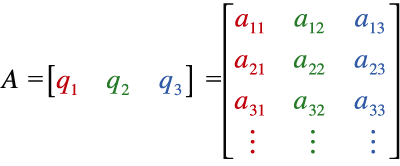

(Eq. 1) |

| A

set of color matching functions, CMFs, can be thought

of as column

vectors, which become the columns of a matrix A. Matrix A can contain

any of the 4 sets of color matching functions above,

for example. |

SPD as a Column Vector

The spectral power distribution of any light can be written as a column vector $L_1$. It is then summarized by a tristimulus vector V:

$$V = A^{T} L_1 . \qquad (2)$$

Light $L_1$ is a color match for light $L_2$ if

$$A^{T} L_1 = A^{T} L_2 . \qquad (3)$$

Refer again to Figure 1. If Eq. (3) holds for one set of CMFs A, then it will hold for the other sets. That much is standard teaching.
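Here is a minimal Python sketch of Eqs. (2) and (3). It uses a made-up 5-sample "spectrum" in place of real CMF tables, so the matrices `A` and `M` and the lights are all hypothetical. It also shows why equivalent CMFs agree. If $A' = AM$ for an invertible 3×3 matrix M, then $A'^{T}L = M^{T}A^{T}L$, so tristimulus vectors that are equal under A stay equal under A'.

```python
# Eq. (2): V = A^T L, and Eq. (3): L1 matches L2 if A^T L1 == A^T L2.
# All data here are hypothetical toy numbers, not real CMFs.

def transpose(M):
    return [list(row) for row in zip(*M)]

def matmul(P, Q):
    Qt = transpose(Q)
    return [[sum(p * q for p, q in zip(row, col)) for col in Qt] for row in P]

def tristimulus(A, L):
    """V = A^T L; A is N x 3 (rows = wavelength samples), L has length N."""
    return [sum(a * l for a, l in zip(col, L)) for col in transpose(A)]

def is_match(A, L1, L2, tol=1e-9):
    """True if the two lights have equal tristimulus vectors under A."""
    return all(abs(u - v) <= tol
               for u, v in zip(tristimulus(A, L1), tristimulus(A, L2)))

# Toy CMFs: 5 wavelength samples (rows) x 3 functions (columns).
A = [[1, 0, 0],
     [1, 1, 0],
     [0, 1, 0],
     [0, 1, 1],
     [0, 0, 1]]
# An invertible mixing matrix; A*M is an "equivalent" set of CMFs.
M = [[2, 1, 0],
     [0, 1, 1],
     [1, 0, 3]]
A_equiv = matmul(A, M)

L1 = [1, 1, 1, 1, 1]
L2 = [2, 0, 3, 0, 2]   # a different SPD, chosen to be a metamer of L1

print(tristimulus(A, L1), tristimulus(A, L2))            # [2, 3, 2] [2, 3, 2]
print(is_match(A, L1, L2), is_match(A_equiv, L1, L2))    # True True
```

The difference `L2 - L1 = [1, -1, 2, -1, 1]` has tristimulus vector zero under `A`. It is a metameric black of the kind discussed further on.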

Moment of Reflection

So, everybody knows that alternate sets of functions can be equivalent for color matching. But it's remarkable that 2 sets can be equivalent-for-matching, yet look very different and be suited for other applications that go beyond matching. For example:

Nominal time = 1:37 pm, Pacific Time

Animation Digression: Simulated Experimental Data

What's Missing?

So... we have at least 4 interesting sets of functions that are equivalent-for-matching but have further meanings. What's missing is a good scheme for vectorial addition of colors. In his 1970 book, Tom Cornsweet emphasized vector addition to account for overlapping receptor sensitivities:

My method is inspired by Cornsweet, but also by Jozef Cohen.

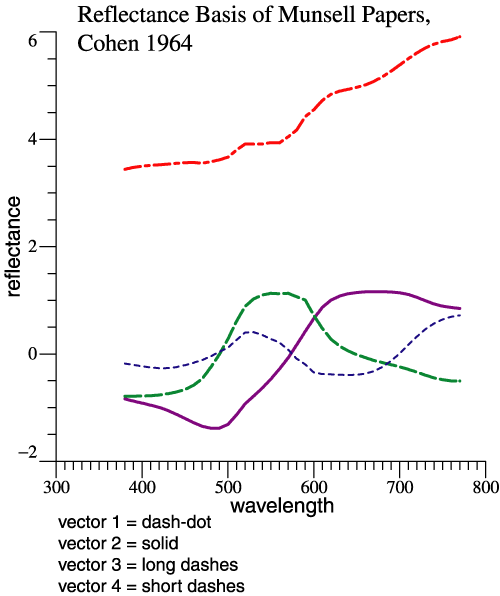

| Jozef Cohen's Innovations. |

Jozef B. Cohen's Book |

|

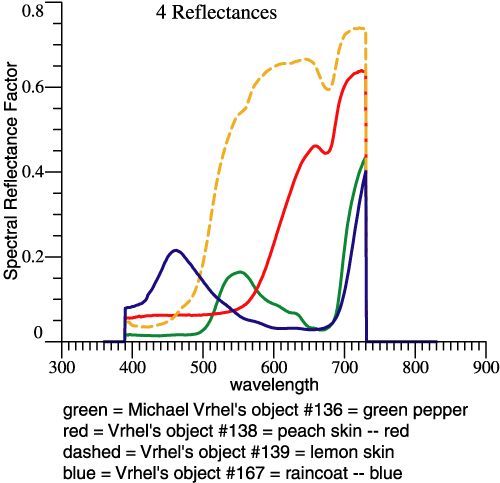

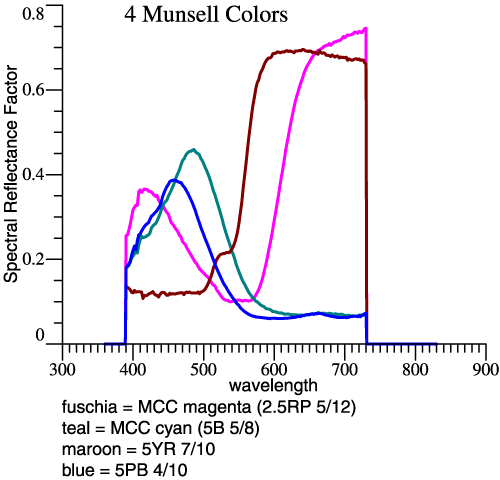

| Smoothness

of

Object Spectral Reflectances |

|

|

|

|

The “Dependency” paper was little

noted for 20 years, but is now widely cited. The idea

is referred to as

a “linear model,” and is the basis for many studies

with a statistical

flavor. Having used some linear algebra ideas in a

curve-fitting task,

Cohen then asked, “Could linear algebra be applied

directly in thinking

about color-matching data?” In the traditional

presentation,

color-matching data are transformed and one version is

as good as

another. Cohen asked a daring question: “Is there an

invariant

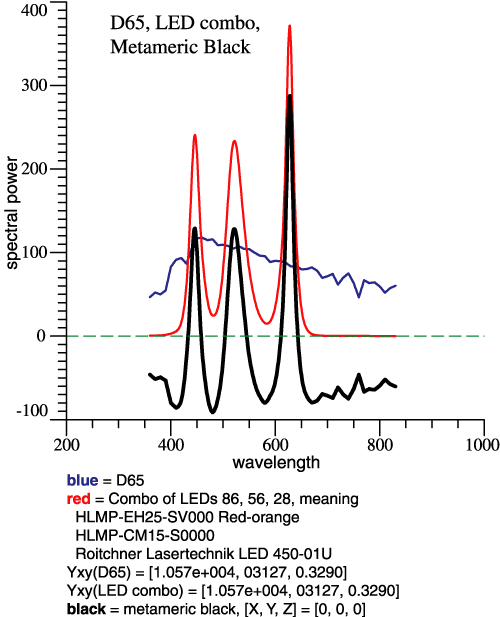

description for a colored light?” We may well ask, “What would it even mean, that a function or vector is invariant?” Cohen started with Wyszecki’s idea of “metameric blacks.” If 2 lights have different SPDs, but are colorimetrically the same, they are metamers. Subtracting one light from the other gives a metameric black, a non-zero function with tristimulus vector = 0. Consider an example of D65 and a mixture of 3 LEDs adjusted to match: |

The figure at left shows the concept of a metameric black. A mixture of 3 LEDs matches D65. Subtracting D65 from the LED combination gives the black graph, a function that crosses zero and is colorimetrically black. As Wyszecki noticed, the metameric black is a component that you cannot see. Cohen then said, “Let’s take a light (such as D65) and separate it into the component that you definitely can see, and a metameric black.” (My wording.) The component that you definitely can see is the light projected into the vector space of the color-matching functions. Cohen called that component... ... ta-dah ...

| The Fundamental

Metamer. |

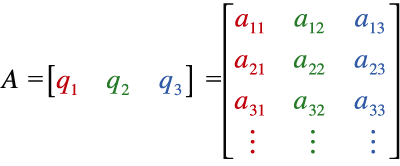

| Cohen needed a formula to compute the fundamental metamer. As a practical matter, the “projection into the space of the CMFs” means a linear combination of CMFs that is a least-squares fit to the initial light. As on p. 3, let A be a matrix whose columns are a set of CMFs, | |

|

. (1) |

For a light L(λ), we want to find the 3-vector of coefficients, $C_{3\times 1}$, such that

$$L \approx AC , \qquad (4)$$

where ‘≈’ symbolizes that least-squares fit. Today, you might do a web search and learn to solve for C using the Moore-Penrose pseudo-inverse,

$$A^{+} = (A^{T}A)^{-1}A^{T} , \qquad (5)$$

so that

$$C = A^{+}L = (A^{T}A)^{-1}A^{T}L . \qquad (6)$$

That would give you C as a numeric 3-vector, and if we define

$$\text{Fundamental metamer of } L = L^{*} = \text{the least-squares fit to } L , \qquad (7)$$

The Fundamental Metamer, continued.

then,

$$L^{*} = AC . \qquad (8)$$

A numerical value for the 3-vector C gives a numerical vector for L*, but no new insight. The value of C (the coefficients for a linear combination of CMFs) depends on which CMFs you are using. Nothing is invariant. Deriving everything from a blank page, Cohen in effect combined Eq. (6) and Eq. (8) to find that

$$L^{*} = \left[A(A^{T}A)^{-1}A^{T}\right] L . \qquad (9)$$

Here L is the original light, L* is its fundamental metamer, and the expression in square brackets is the projection matrix R:

$$R = A(A^{T}A)^{-1}A^{T} . \qquad (10)$$

Cohen noticed that R is invariant, and that led to other interesting ideas. My algebra looks different from Cohen’s, but the invariant projection matrix R remains important. R is invariant in the strongest possible sense: if A is any set of equivalent CMFs, like any of the sets in Fig. 1, then R is the same large array of numbers, not scaled or transformed in any way. For proof, see Q&A.
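The invariance takes one line of algebra. For any invertible 3×3 matrix M,

$$A' = AM \;\Rightarrow\; A'(A'^{T}A')^{-1}A'^{T} = AM\,M^{-1}(A^{T}A)^{-1}(M^{T})^{-1}M^{T}A^{T} = A(A^{T}A)^{-1}A^{T} = R .$$

The Python sketch below checks this with exact rational arithmetic (`fractions.Fraction`). It uses the same hypothetical toy 5-sample matrices as a stand-in for real CMFs. It also checks two standard properties of a projection matrix: R is symmetric, and RR = R.

```python
from fractions import Fraction

def transpose(M):
    return [list(row) for row in zip(*M)]

def matmul(P, Q):
    Qt = transpose(Q)
    return [[sum(p * q for p, q in zip(row, col)) for col in Qt] for row in P]

def inverse(M):
    """Exact Gauss-Jordan inverse of a square, invertible matrix."""
    n = len(M)
    aug = [[Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
           for i, row in enumerate(M)]
    for c in range(n):
        p = next(r for r in range(c, n) if aug[r][c] != 0)  # nonzero pivot row
        aug[c], aug[p] = aug[p], aug[c]
        pivot = aug[c][c]
        aug[c] = [x / pivot for x in aug[c]]
        for r in range(n):
            if r != c and aug[r][c] != 0:
                f = aug[r][c]
                aug[r] = [x - f * y for x, y in zip(aug[r], aug[c])]
    return [row[n:] for row in aug]

def r_cohen(A):
    """Projection matrix R = A (A^T A)^-1 A^T, Eq. (10)."""
    At = transpose(A)
    return matmul(matmul(A, inverse(matmul(At, A))), At)

A = [[1, 0, 0], [1, 1, 0], [0, 1, 0], [0, 1, 1], [0, 0, 1]]  # toy CMFs
M = [[2, 1, 0], [0, 1, 1], [1, 0, 3]]                        # invertible mix

R = r_cohen(A)
print(R == r_cohen(matmul(A, M)))              # True: the same N x N array
print(R == transpose(R), matmul(R, R) == R)    # True True
```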

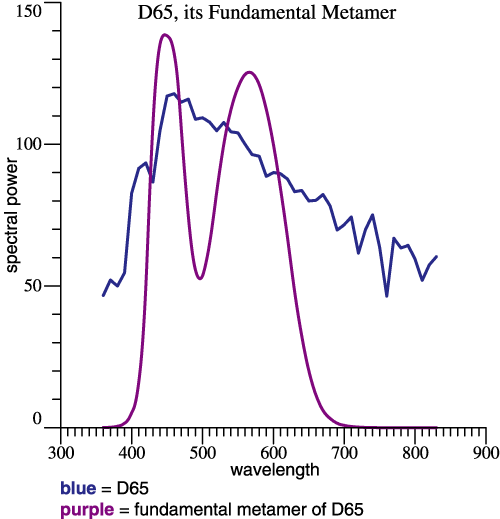

| Fundamental

Metamer Example. |

|

At left for example, the blue

curve shows L

= D65. The

purple curve is L*,

the

fundamental metamer of D65. The two curves are

metamers in the ordinary

sense. L*, a

linear

combination of CMFs, is found by: L*

= RL .

(11)

Eqs. (8) and (11) have '=' and not '≈' because L* by definition is the least-squares approximation. So that’s Jozef Cohen’s

Highly Original Contribution. Now a few screens above,

We were talking about vectors... |
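Eqs. (6), (8), and (11) can be put together in a short Python sketch. The data are hypothetical toys again, and the 5-sample "D65" is made up. The sketch computes the coefficient vector C for two equivalent CMF sets and shows that C differs between them while L* = AC does not. It also checks that L − L* is a metameric black, meaning $A^{T}(L - L^{*}) = 0$.

```python
from fractions import Fraction

def transpose(M):
    return [list(row) for row in zip(*M)]

def matmul(P, Q):
    Qt = transpose(Q)
    return [[sum(p * q for p, q in zip(row, col)) for col in Qt] for row in P]

def inverse(M):
    """Exact Gauss-Jordan inverse of a square, invertible matrix."""
    n = len(M)
    aug = [[Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
           for i, row in enumerate(M)]
    for c in range(n):
        p = next(r for r in range(c, n) if aug[r][c] != 0)
        aug[c], aug[p] = aug[p], aug[c]
        pivot = aug[c][c]
        aug[c] = [x / pivot for x in aug[c]]
        for r in range(n):
            if r != c and aug[r][c] != 0:
                f = aug[r][c]
                aug[r] = [x - f * y for x, y in zip(aug[r], aug[c])]
    return [row[n:] for row in aug]

def coefficients(A, L):
    """C = (A^T A)^-1 A^T L, Eq. (6)."""
    At = transpose(A)
    AtL = [[sum(a * l for a, l in zip(row, L))] for row in At]  # 3 x 1 column
    return [c[0] for c in matmul(inverse(matmul(At, A)), AtL)]

def fundamental_metamer(A, L):
    """L* = A C, Eq. (8), which equals R L, Eq. (11)."""
    C = coefficients(A, L)
    return [sum(a * c for a, c in zip(row, C)) for row in A]

A = [[1, 0, 0], [1, 1, 0], [0, 1, 0], [0, 1, 1], [0, 0, 1]]  # toy CMFs
M = [[2, 1, 0], [0, 1, 1], [1, 0, 3]]                        # invertible mix
A_equiv = matmul(A, M)
L = [3, 1, 4, 1, 5]   # hypothetical SPD standing in for D65

L_star = fundamental_metamer(A, L)
black = [l - s for l, s in zip(L, L_star)]   # the metameric black

print(coefficients(A, L))          # C depends on the CMF set...
print(coefficients(A_equiv, L))
print(fundamental_metamer(A_equiv, L) == L_star)   # ...but L* does not: True
print([sum(a * b for a, b in zip(col, black)) for col in transpose(A)])  # all zero
```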

Cohen References:

Cohen, Jozef, “Dependency of the spectral reflectance curves of the Munsell color chips,” Psychonom. Sci. 1, 369-370 (1964).

Cohen, Jozef, Visual Color and Color Mixture: The Fundamental Color Space, University of Illinois Press, Champaign, Illinois, 2001, 248 pp. <Book remains in print, as far as I know.>

|

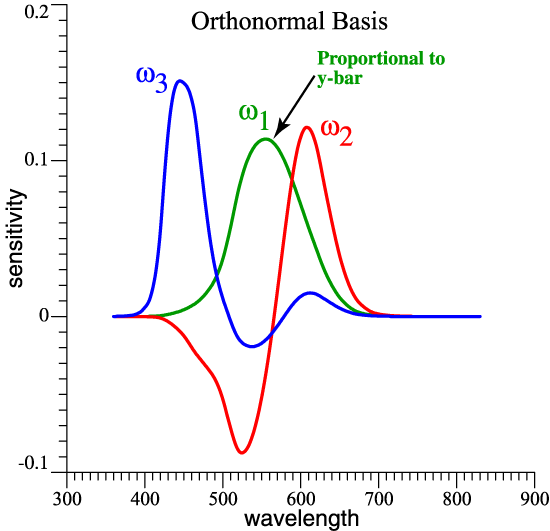

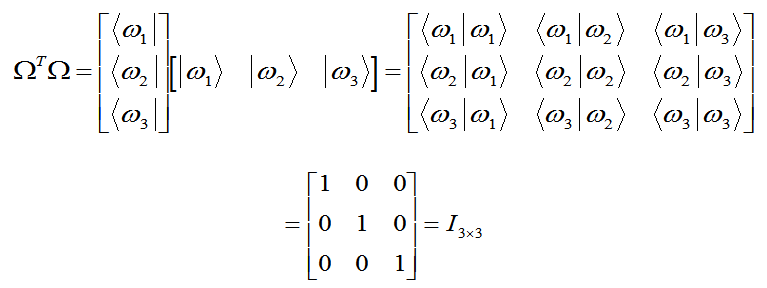

| Orthonormal

Opponent Color Matching

Functions. |

| Orthonormal

Opponent Color Matching

Functions: Description. |

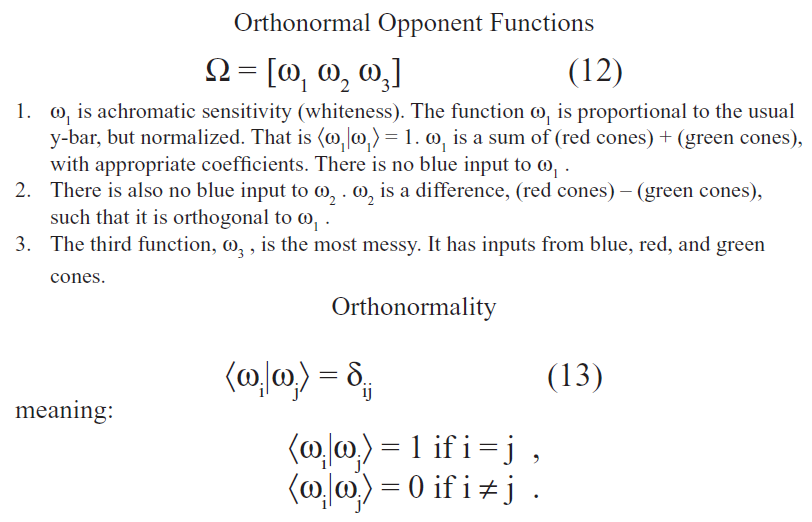

| Bra

and

Ket Notation |

| Working

Class Summary |

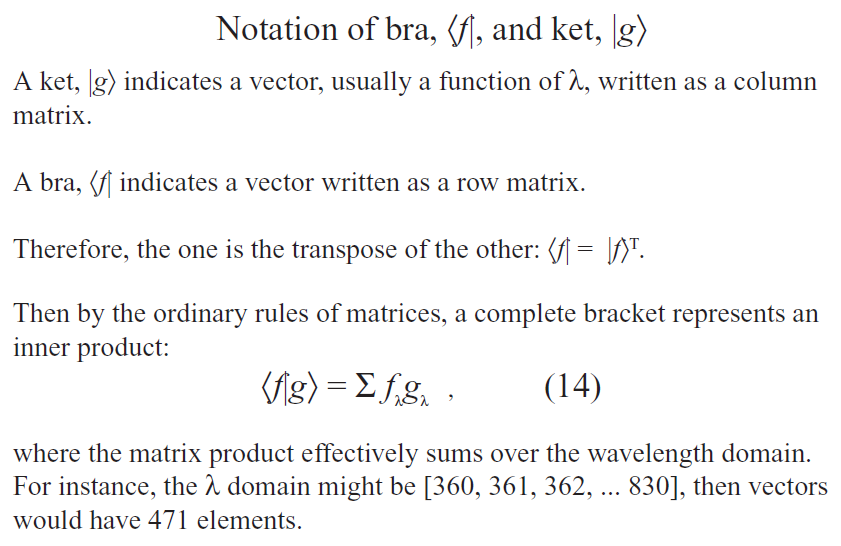

Although many clever ideas (from Cohen, Guth, Thornton, Buchsbaum, etc.) guided its development, in the end the orthonormal basis is not radically new:

Practicality

A new orthonormal basis could be created for any standard observer or camera. If orthonormal opponent functions based on the 1931 2° Observer will suffice, tabulated data are available at http://www.jimworthey.com/orthobasis.txt .

Etc., etc. Recall that the graphs are above.

Nominal time = 2:08 pm, Pacific Time

Fun with Matrices

(15)

Alternate Practical Presentation <Bonus item, not in handout!>

If you already have the functions x-bar, y-bar, and z-bar handy in your computer, then you can generate the orthonormal basis by adding and subtracting those vectors. It's one matrix multiplication: if T is the 3×3 matrix below, and X is the array [x-bar y-bar z-bar], then Ω = X*T. What's below is program output, where OB = OrthoBasis = Ω. The output explains a derivation for T and what's calculated numerically.

Abbreviate OB = OrthoBasis = Omega, and X = [x y z]-bar. We seek a transformation T such that OB = X*T. So, solve that equation for T. Multiply both sides by OB′, then apply orthonormality:

$$OB' \cdot OB = OB' \cdot X \cdot T, \qquad I = OB' \cdot X \cdot T, \qquad T = \mathrm{inv}(OB' \cdot X)$$

This T is the transformation matrix that we need:

$$T = \mathrm{inv}(OB' \cdot X) = \begin{bmatrix} 0.0000000000 & 0.1875633629 & 0.0306246445 \\ 0.1138107224 & -0.1332892909 & -0.0312159276 \\ 0.0000000000 & -0.0397124415 & 0.0793190892 \end{bmatrix}$$

Now compute OB = X*T, and test the result:

$$OB' \cdot OB = \begin{bmatrix} 1.0000000000 & 0.0000000000 & 0.0000000000 \\ 0.0000000000 & 1.0000000000 & -0.0000000000 \\ 0.0000000000 & -0.0000000000 & 1.0000000000 \end{bmatrix}$$

Carrying plenty of digits gives a convincing identity matrix in the check calculation.

| Graphing

a Vector |

| 5

Vectors |

| 5

Vectors Added |

| Wavelengths

of Strong Action |

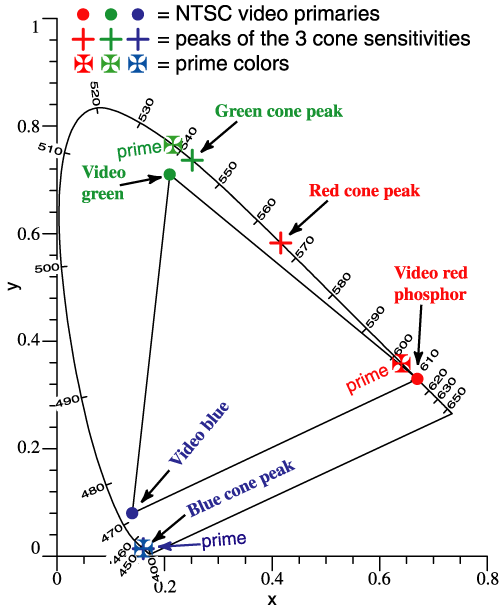

| A color mixture is predicted by adding vectors, but vectors [X Y Z]T are traditionally not graphed. Now suppose that it is 1950 and we are trying to invent color television. It will be helpful if the phosphor colors correspond to long vectors in well-separated directions. Indeed, the NTSC phosphors are approximately at Thornton’s Prime Colors, 603, 538, 446 nm. | |||||||||||||||||||||||||||||

|

|

||||||||||||||||||||||||||||

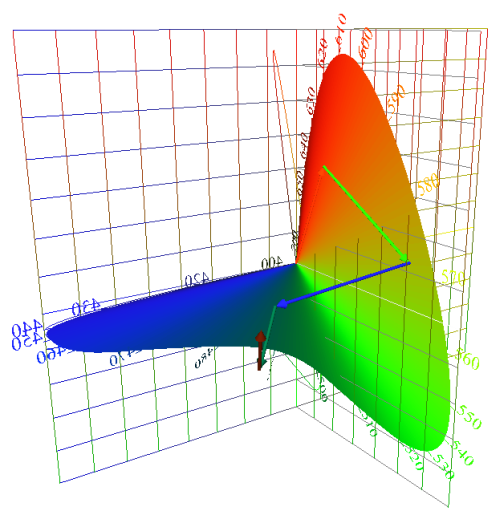

| Locus

of Unit Monochromats |

| Locus

of Unit Monochromats (continued) |

| 5

Vectors Added (revisited) |

|

The locus of unit

monochromats can be included to give a frame of

reference to a vector

sum or other interesting diagram. |

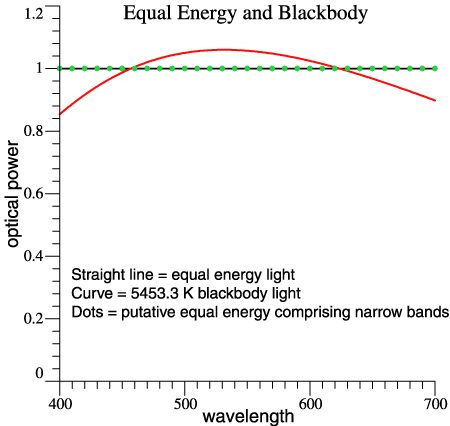

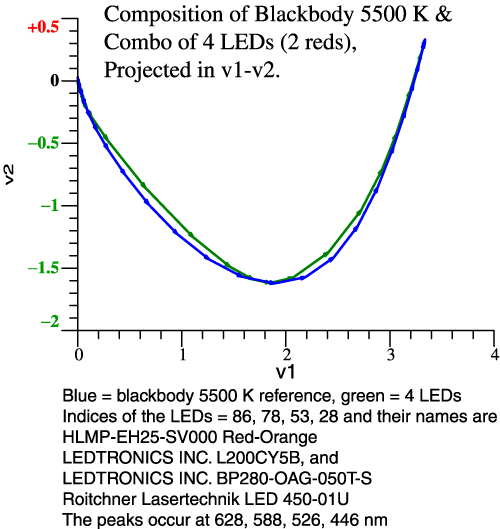

Composition of a White Light

The so-called "equal-energy light" has constant power per unit wavelength across the spectrum, indicated by a solid black line in the figure at right. The straight-line spectrum makes for a simple discussion and is similar to a more realistic light, 5453 K blackbody (or 5500 K if you like). For the next step, let’s assume an equal-energy light that packs all of its power at the 10-nm points (400 nm, 410 nm, and so forth), as indicated by the green dots.
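A short sketch of that construction follows. Suppose the light puts power $P_i$ at sample wavelength $\lambda_i$. Then band i contributes the 3-vector $P_i\,\omega(\lambda_i)$, where $\omega(\lambda_i)$ is row i of Ω, and the chain is the running sum of those contributions. The rows of `omega_rows` are hypothetical, chosen only so that v2 swings one way and back. Which sign of v2 means "green" is also an assumption of the toy data.

```python
from itertools import accumulate

def add(u, v):
    return [a + b for a, b in zip(u, v)]

def band_vectors(omega_rows, power):
    """Contribution of each band: power[i] * omega(lambda_i)."""
    return [[p * w for w in row] for row, p in zip(omega_rows, power)]

def vector_chain(omega_rows, power):
    """Running sum of band contributions; the last point is the light's V."""
    return list(accumulate(band_vectors(omega_rows, power), add))

# Hypothetical (v1, v2, v3) rows for 5 bands, short to long wavelength.
omega_rows = [[1, 0, 2],
              [2, -1, 1],
              [3, -2, 0],
              [2, 1, -1],
              [1, 2, -1]]
equal_energy = [1] * len(omega_rows)   # the same power in every band

chain = vector_chain(omega_rows, equal_energy)
for point in chain:
    print(point)
# v2 swings down to -3 and returns to 0 at the white point [9, 0, 1].
```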

Composition of a White Light (continued)

Composition of a White Light (continued)

The swing toward red almost cancels the swing toward green. The greenish and reddish vectors are all present in the light, but red approximately cancels green. I have explained the same idea in the past without vectors.

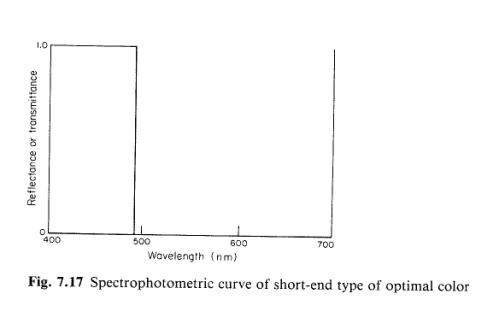

| Now

let

this white light be shined on colored objects, and

think of what

an object color does. A red object reflects a range of

the reddest

vectors, while absorbing green and blue. The light

looks white, but

it must have red in it to reveal the red object as

different from

gray or green. The most saturated colors will be those that reflect one or two segments of the spectrum and absorb the rest. You can think of those colors as "snipping out" a segment of the chain of vectors that compose the white light. Is it a new idea to snip out part of the spectrum? No! The idea is called limit colors and is associated with MacAdam, Schrödinger and Brill, among others. On the next page is an illustration from MacAdam’s book, showing the possible ways to snip out parts of the spectrum. I won’t discuss limit colors at length, but they are a well-known idea. |
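Snipping can be stated the same way. Take an ideal object that reflects fully in bands i through j−1 and absorbs everything else. Its tristimulus vector equals the difference between two points on the chain of the white light. The sketch below uses hypothetical band vectors and checks that summing the reflected bands directly agrees with the chain difference.

```python
from itertools import accumulate

def add(u, v):
    return [a + b for a, b in zip(u, v)]

def chain_with_origin(bands):
    """Chain points starting at the origin: point k = sum of bands[:k]."""
    return list(accumulate(bands, add, initial=[0] * len(bands[0])))

def limit_color(bands, i, j):
    """Tristimulus vector of an ideal object reflecting bands i..j-1 only."""
    reflectance = [1 if i <= k < j else 0 for k in range(len(bands))]
    total = [0] * len(bands[0])
    for r, b in zip(reflectance, bands):
        total = add(total, [r * x for x in b])
    return total

# Hypothetical band vectors (v1, v2, v3), short to long wavelength.
bands = [[1, 0, 2], [2, -1, 1], [3, -2, 0], [2, 1, -1], [1, 2, -1]]
pts = chain_with_origin(bands)
snip = [b - a for a, b in zip(pts[3], pts[5])]   # chain segment from point 3 to 5
print(limit_color(bands, 3, 5), snip)            # [3, 3, -2] [3, 3, -2]
```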

Objects that Snip Out Parts of a White Light

Figures from D. L. MacAdam, Color Measurement: Theme and Variations, Springer, Berlin, 1981.

Observation: Many discussions of limit colors focus on a color gamut. Here we look at a more basic step, the limit color's interaction with the components of a white light.

|

| One

Example: a Short-end Optimal Color |

| Why

So Many 3-Dimensional Drawings? |

Let’s look at another use of the orthonormal opponent functions.

| Minimizing

Correlation |

|

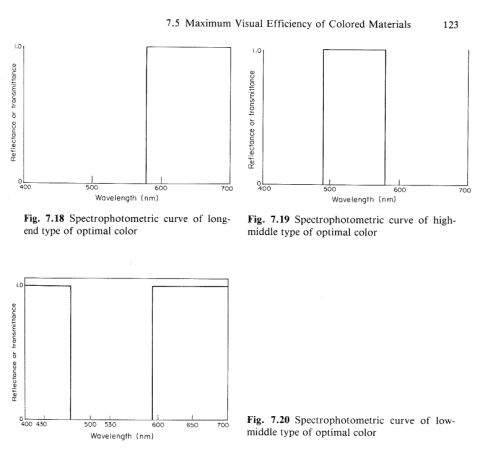

The orthonormal basis and Cohen’s space are a multi-purpose system. One benefit is that v1, v2, v3 are less correlated than other sets of tristimulus values.

|

|

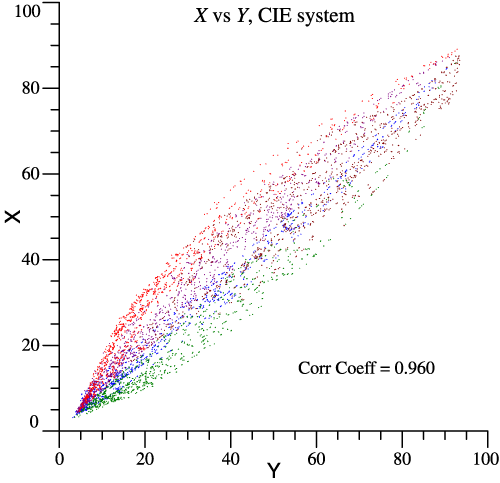

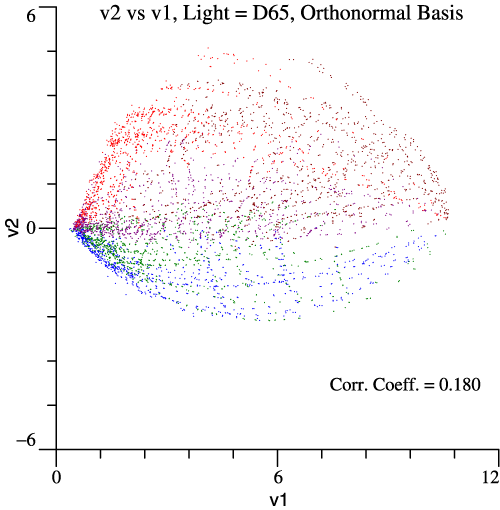

| Spectral

reflectances

are available for a set of 5572 paint chips: Antonio

Garcia-Beltran, Juan L. Nieves, Javier

Hernandez-Andres, Javier

Romero, "Linear Bases for Spectral

Reflectance Functions of Acrylic

Paints," Color

Res. Appl. 23(1):39-45,

February 1998. Professor Garcia kindly sent me the raw

data. To make realistic visual stimuli, let the paint chips be illuminated by D65. Then one stimulus can be plotted against another to see if they are correlated or independent. Garcia et al. put the chips in color groups, so I’ve colored the dots accordingly. The graph above shows that red and green cone stimuli are highly correlated, correlation coefficient = 0.976. X vs Y in the legacy system is a little better, while the opponent stimuli v1 and v2 are most independent, Correlation Coefficient = 0.180 . |
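The correlation coefficients quoted above are assumed to be ordinary Pearson coefficients between two stimulus values computed chip by chip. The minimal sketch below also assumes that each stimulus is the sum over wavelength samples of sensitivity × illuminant × reflectance. The three toy "chips" and all spectra are hypothetical, not the Garcia-Beltran data.

```python
import math

def stimulus(sensitivity, illuminant, reflectance):
    """One tristimulus component: sum of s(λ) E(λ) ρ(λ) over samples."""
    return sum(s * e * r for s, e, r in zip(sensitivity, illuminant, reflectance))

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical 4-sample spectra, short to long wavelength.
illum = [1.0, 1.0, 1.0, 1.0]
red_cone = [0.0, 0.3, 0.9, 1.0]
green_cone = [0.1, 0.6, 1.0, 0.6]
chips = [[0.2, 0.3, 0.6, 0.8], [0.7, 0.6, 0.3, 0.2], [0.4, 0.5, 0.5, 0.4]]

r = [stimulus(red_cone, illum, c) for c in chips]
g = [stimulus(green_cone, illum, c) for c in chips]
print(f"r-g correlation = {pearson(r, g):.3f}")
```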

|

|

|

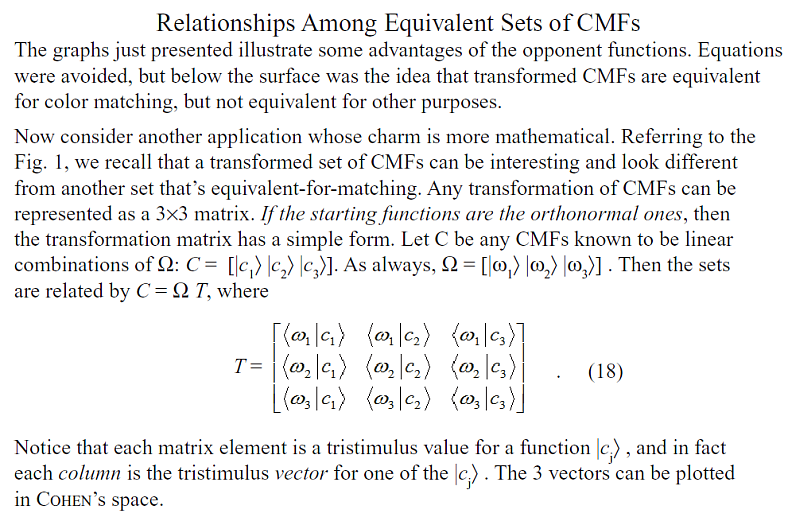

| Relationships

Among Equivalent Sets of CMFs |

| CMFs

Graphed as if they Were Lights |

| Opponent

Colors and Information Transmission |

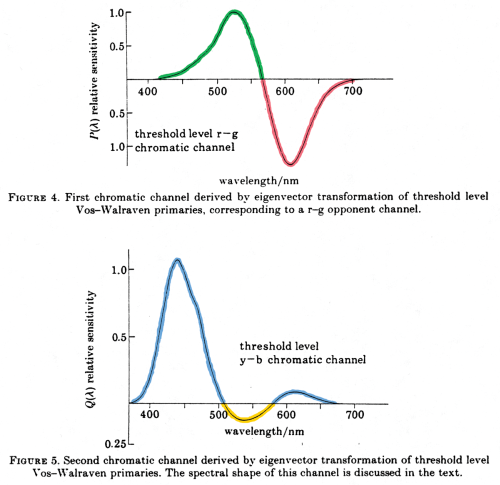

Above, I’ve shown the benefit of an opponent system for information transmission (image compression) in an empirical way, by starting with thousands of paint chips. Buchsbaum and Gottschalk proceeded differently, deriving an opponent-color system to optimize information transmission. They started with cone functions similar to the ones I use, and got a result like my opponent model. That is, they got an achromatic function (not shown) similar to y-bar, and the red-green and blue-yellow functions shown at right. The achromatic and red-green functions have minimal blue input. The blue-yellow function crosses the abscissa twice and looks very much like the function that I have been using.

This equation, scanned right out of the original article, shows how their first two functions have little blue input, etc.:

Their functions A, P, Q are orthogonal, but not normalized. Long story short, Buchsbaum and Gottschalk started with cone functions and derived opponent functions, with a specific goal of optimum information transmission. I derived opponent functions in a simple way and discovered their connection to Cohen’s work. In the end, the sets of functions are extremely similar, confirming that the opponent basis is appropriate for image compression and propagation-of-errors. All orthonormal sets based on the same cones lead to the same Locus of Unit Monochromats, so in that sense Buchsbaum’s set cannot be wrong. [Gershon Buchsbaum and A. Gottschalk, “Trichromacy, opponent colours coding and optimum colour information transmission in the retina,” Proc. R. Soc. Lond. B 220, 89-113 (1983).]

Value of Cohen's Space and Orthonormal Basis

Benefits of the orthonormal basis can be listed:

The last list item is the most important and echoes Jozef Cohen’s ideas. If you have two lights (2 SPDs) as stimuli to vision, they have an intrinsic vector relationship. Each light has an invariant fundamental metamer, which is its projection into the vector space of the CMFs. Vectors V obtained using Ω have the same amplitudes and vector relationships as fundamental metamers. Functions x-bar and y-bar are intrinsically 40.5° apart, but ω1, ω2, ω3 are 90° apart, so v1, v2, v3 are more appropriate axes than X, Y, Z.
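Two facts behind this paragraph can be checked numerically.

- The angle between two functions is taken here from the ordinary inner product over wavelength samples. This convention is an assumption.
- When Ω is orthonormal, $R = \Omega\Omega^{T}$. It follows that $L_1^{*}\cdot L_2^{*} = L_1^{T}RL_2 = (\Omega^{T}L_1)\cdot(\Omega^{T}L_2) = V_1\cdot V_2$, so the v-vectors have the same lengths and angles as the fundamental metamers.

The toy Ω below is hypothetical but exactly orthonormal, which lets the comparison be exact.

```python
import math
from fractions import Fraction as F

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def angle_deg(f, g):
    """Angle between two sampled functions, from the ordinary inner product."""
    c = dot(f, g) / math.sqrt(dot(f, f) * dot(g, g))
    return math.degrees(math.acos(max(-1.0, min(1.0, c))))

# Hypothetical orthonormal basis: 3 functions, each sampled at 5 wavelengths.
omega_cols = [[0, F(3, 5), F(4, 5), 0, 0],
              [1, 0, 0, 0, 0],
              [0, 0, 0, 0, 1]]

def v_vector(L):
    """V = Omega^T L."""
    return [dot(w, L) for w in omega_cols]

def fundamental_metamer(L):
    """L* = Omega V = Omega Omega^T L, since R = Omega Omega^T here."""
    V = v_vector(L)
    return [sum(V[k] * omega_cols[k][i] for k in range(3)) for i in range(len(L))]

L1 = [1, 2, 3, 4, 5]   # hypothetical lights
L2 = [5, 1, 0, 2, 2]
print(dot(v_vector(L1), v_vector(L2))
      == dot(fundamental_metamer(L1), fundamental_metamer(L2)))   # True
print(round(angle_deg([1, 0], [1, 1]), 6))                        # 45.0
```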

| End

Digression,

return to Vector Composition of Lights |

| Some

Lights Have Less Red and

Less Green |

|

A

white light has net redness or greenness that is small

or zero. The

same white point can be reached by different lights in

different

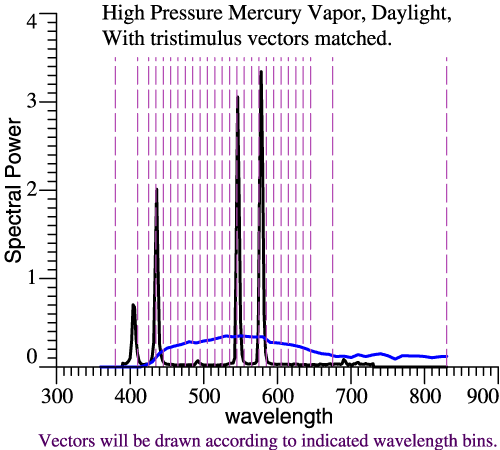

ways. SPDs of 2 lights are plotted at right. The black line is High Pressure Mercury Vapor light, while the blue is JMW Daylight, adjusted to have the same tristimulus vector. (Yes, that means they are matched for illuminance and chromaticity.) The wavelength domain is chopped according to the dashed vertical lines. The wavelength bands are 10 nm, except at the ends of the spectrum, with most bands centered at multiples of 10 nm. If one light then multiplies the columns of Ω, those products could be graphed as a distorted LUM, but we skip that step. |

|

| Instead, the color composition of each light will be graphed as a chain of vectors. Nominal time = 2:42 pm | |

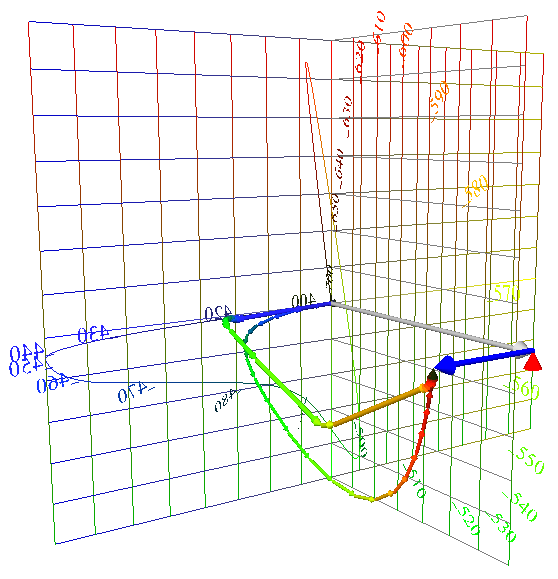

| Comparing

Color Composition of Lights |

| Now the same two lights are

compared in their vector composition. The smooth chain

of thin arrows

shows the composition of daylight. Slightly thicker

arrows show the

mercury light. The mercury light radiates most of its power in a few narrow bands, leading to a few long arrows that leap toward the final white point. Compared to “natural daylight,” the mercury light makes a smaller swing towards green, and a smaller swing back towards red. Such a light would leave the red pepper starved for red light with which to express its redness.  |

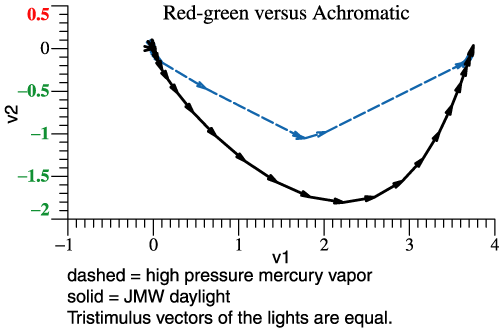

|

| Above two lights are

compared by the narrow-band components of their

tristimulus vectors. At

right the same information is shown, but projected

into the v1-v2

plane. The loss of

red-green contrast is the main issue with lights of

“poor color

rendering,” and that shows up in this flat graph. If

you were really

designing lights, you might use the v1-v2

projection as a main tool. You might want to add

wavelength labels to

the vectors. (In the VRML picture, λ info is indicated

by coloration.

In the picture at right, vectors that are

approximately parallel

pertain to the same band.) |

|

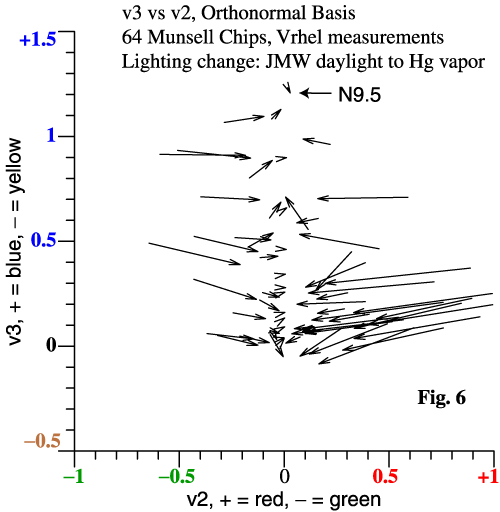

| Other interesting data can be

plotted in Cohen’s space. Suppose that the 64 Munsell

chips from

Vrhel et al. are illuminated first by daylight and

then by the mercury

light. Since the mercury light lacks red and green, we

expect it to

create a general loss of red-green contrast among the

64 chips. The graph at right is a projection into the v2-v3 plane. Each arrow tail is the tristimulus vector of a paint chip under daylight. The arrowhead is the same chip under the mercury light. The lightest neutral paper is N9.5, and is a proxy for the lights. Notice that 3-vectors projected into a plane still have the properties of vectors in the 2D plane. As expected, red and green paint chips suffer a tremendous crash towards neutral. |

|

| Actual neutral

papers appear as

arrows of zero length. For an alternate presentation about comparing lights, please see “How White Light Works,” and the related graphical material. |

Cameras and the "Luther Criterion"

The Luther Criterion, also known as the Maxwell-Ives Criterion: For color fidelity, a camera’s spectral sensitivities must be linear combinations of those for the eye.
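One way to put a number on the Luther Criterion is the fraction of each camera function that lies outside the eye's CMF space. This measure is an illustrative choice, not a metric named in this document. The sketch projects the camera function with the eye's projection matrix R and measures what is left over. R is formed as $QQ^{T}$ from an orthonormal basis Q of the eye's CMFs. That equals Cohen's R, because R is the same for any equivalent basis and $Q^{T}Q = I$. The toy eye and camera functions are hypothetical.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def gram_schmidt_cols(cols):
    """Orthonormalize a list of column vectors, in order."""
    basis = []
    for c in cols:
        v = [float(x) for x in c]
        for q in basis:
            d = dot(v, q)
            v = [a - d * b for a, b in zip(v, q)]
        n = math.sqrt(dot(v, v))
        basis.append([a / n for a in v])
    return basis

def luther_residual(eye_cols, s):
    """Relative length of the part of s that the eye's projection R removes."""
    proj = [0.0] * len(s)
    for q in gram_schmidt_cols(eye_cols):   # R s = sum_k (q_k . s) q_k
        d = dot(q, s)
        proj = [p + d * x for p, x in zip(proj, q)]
    resid = [a - b for a, b in zip(s, proj)]
    return math.sqrt(dot(resid, resid) / dot(s, s))

# Hypothetical eye CMFs (3 columns, 5 samples) and two camera functions.
eye = [[1, 1, 0, 0, 0], [0, 1, 1, 1, 0], [0, 0, 0, 1, 1]]
cam_ok = [a + b for a, b in zip(eye[0], eye[1])]   # a linear combination
cam_bad = [1, 0, 0, 0, 0]                          # outside the eye's space
print(f"{luther_residual(eye, cam_ok):.2e}  {luther_residual(eye, cam_bad):.3f}")
```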

Bold Steps for Camera Analysis

Compare Camera LUM to that of Human

The Fit First Method

Conceptually, the camera’s LUM (spheres) is more fundamental than the fit to the human LUM (arrowheads). The trick of the Fit First Method is to find the best fit first, then find the LUM from that. Here is the computer code:

`Rcam = RCohen(rgbSens)  # 1`

`CamTemp = Rcam*OrthoBasis  # 2`

`GramSchmidt(CamTemp, CamOmega)  # 3`

The camera’s 3 λ sensitivities are stored as the columns of array rgbSens. Because of the invariance of projection matrix R, it doesn’t matter how the functions are normalized, or whether they are actually in the sequence r, g, b. Statement 1 finds Rcam, the projection matrix R for the camera. RCohen() is a small function, but conceptually,

$$\mathrm{RCohen}(A) = A\,(A^{T}A)^{-1}A^{T} ,$$

written in the program as `A*inv(A'*A)*A'`. In other words, step 1 applies Cohen’s formula for the projection matrix, Eq. (10), so Rcam is the projection matrix for the camera. In step 2, the columns of OrthoBasis are the human orthonormal basis, Ω. The matrix product Rcam*OrthoBasis finds the projection of the human basis into the vector space of the camera. But the wording about projection is another way of saying that step 2 finds the best fit to each ωi by a linear combination of the camera functions. So, step 2 is the “fit” step. (More programming specifics.)
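Statements 1 and 2 can be translated into Python. This is a sketch, not the author's program.

- `r_cohen` mirrors RCohen().
- The 5-sample `rgb_sens` and `ortho_basis` are hypothetical. The toy basis is exactly orthonormal so the arithmetic can stay rational.

The checks confirm three things:

- Rcam does not depend on how the camera functions are scaled or ordered.
- Rcam leaves the camera's own functions unchanged.
- The fitted functions CamTemp already lie in the camera's space, so Rcam·CamTemp = CamTemp.

```python
from fractions import Fraction

def transpose(M):
    return [list(row) for row in zip(*M)]

def matmul(P, Q):
    Qt = transpose(Q)
    return [[sum(p * q for p, q in zip(row, col)) for col in Qt] for row in P]

def inverse(M):
    """Exact Gauss-Jordan inverse of a square, invertible matrix."""
    n = len(M)
    aug = [[Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
           for i, row in enumerate(M)]
    for c in range(n):
        p = next(r for r in range(c, n) if aug[r][c] != 0)
        aug[c], aug[p] = aug[p], aug[c]
        pivot = aug[c][c]
        aug[c] = [x / pivot for x in aug[c]]
        for r in range(n):
            if r != c and aug[r][c] != 0:
                f = aug[r][c]
                aug[r] = [x - f * y for x, y in zip(aug[r], aug[c])]
    return [row[n:] for row in aug]

def r_cohen(A):
    """Projection matrix A (A^T A)^-1 A^T (analogue of RCohen)."""
    At = transpose(A)
    return matmul(matmul(A, inverse(matmul(At, A))), At)

# Hypothetical camera sensitivities: 5 samples (rows) x columns r, g, b.
rgb_sens = [[1, 0, 0], [2, 1, 0], [1, 2, 1], [0, 1, 2], [0, 0, 1]]
# Hypothetical stand-in for Omega (5 x 3), exactly orthonormal.
ortho_basis = [[0, 1, 0],
               [Fraction(3, 5), 0, 0],
               [Fraction(4, 5), 0, 0],
               [0, 0, 0],
               [0, 0, 1]]

rcam = r_cohen(rgb_sens)               # statement 1
cam_temp = matmul(rcam, ortho_basis)   # statement 2: best fit to each omega_j

# Rescale and reorder the camera functions (b, 3r, g/2): Rcam is unchanged.
shuffled = [[row[2], 3 * row[0], Fraction(row[1], 2)] for row in rgb_sens]
print(r_cohen(shuffled) == rcam)             # True
print(matmul(rcam, cam_temp) == cam_temp)    # True
```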

|

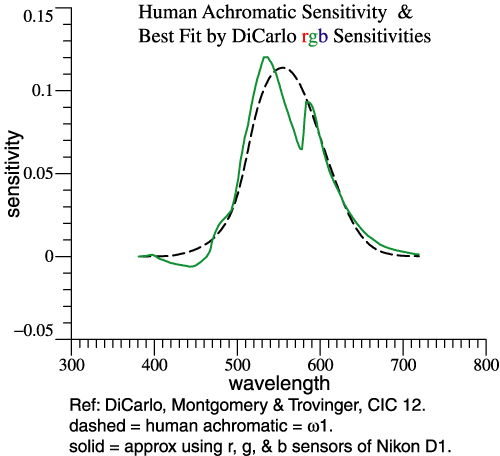

| Step 2 does 3 fits at

once, but let's

look at just one. At right, the dashed curve is ω1,

the human achromatic function. The camera in question

happens to be a

Nikon D1. The solid curve is a linear combination of

that camera’s r,

g, and b functions that is the least-squares best fit

to ω1.

There would be other ways to solve the curve-fitting

problem, but

projection matrix R

is

convenient. A best fit is found for each ωj

separately. The resulting re-mixed camera functions

are not an

orthonormal set. |

Step 3, Orthonormalize the Re-mixed Camera Functions

Since the re-mixed camera functions are computed separately, they are not orthonormal and would not combine to map out a true Locus of Unit Monochromats. But they mimic Ω and are in the right sequence. We need to make them orthonormal, which is what the Gram-Schmidt method does in Step 3.
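Step 3 can be sketched with the modified (sequential) form of Gram-Schmidt. The order matters:

- The fitted achromatic function is processed first, so its direction is kept and it is only rescaled.
- Each later function is made orthogonal to those before it.

That is why it helps that the re-mixed functions arrive "in the right sequence." The input `cam_temp` is hypothetical. This Python `gram_schmidt` is only an assumed analogue of the program's GramSchmidt(CamTemp, CamOmega) routine.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def gram_schmidt(cols):
    """Orthonormalize column vectors in the given order (modified Gram-Schmidt)."""
    out = []
    for c in cols:
        v = [float(x) for x in c]
        for q in out:
            d = dot(v, q)
            v = [a - d * b for a, b in zip(v, q)]
        norm = math.sqrt(dot(v, v))
        if norm == 0.0:
            raise ValueError("columns are linearly dependent")
        out.append([a / norm for a in v])
    return out

# Hypothetical re-mixed camera functions (3 columns, 6 samples), not orthonormal.
cam_temp = [[0.1, 0.5, 0.9, 0.6, 0.2, 0.0],     # fitted achromatic
            [0.3, 0.2, -0.4, -0.1, 0.5, 0.3],   # fitted red-green
            [0.6, 0.3, -0.2, -0.3, -0.1, 0.0]]  # fitted blue-yellow
cam_omega = gram_schmidt(cam_temp)

gram = [[dot(p, q) for q in cam_omega] for p in cam_omega]
for row in gram:
    print(" ".join(f"{x:8.5f}" for x in row))   # approximately the identity
```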

|

|

|

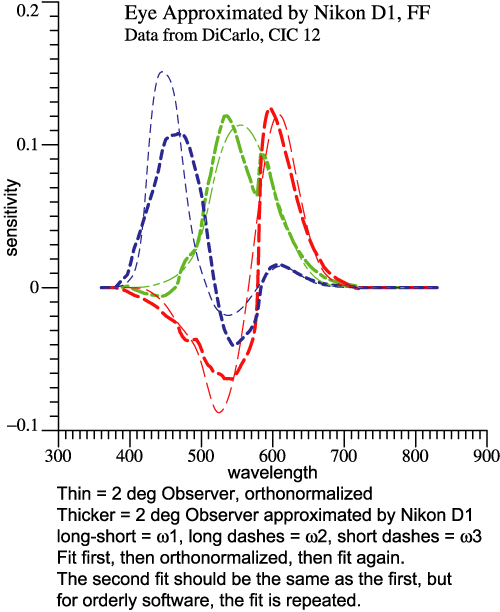

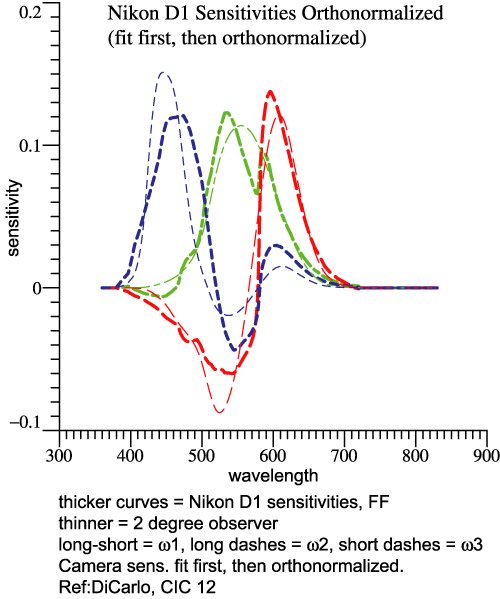

|

The

two sets of graphs above look similar. But the

one on the left shows the set of “fit” functions. The

one on the

right shows the orthonormal basis of the Nikon D1. The

thinner curves

pertain to the 2°

observer, the thicker ones to the camera. Why Does

it

Matter?

When

you have the orthonormal basis, for the eye or for a

camera, you

can do many things with it.

Combining the 3 functions generates the Locus of Unit

Monochromats.

The orthonormal property leads to some simple

derivations. On the Q&A

page, see "Can

we have fun with orthonormal functions?"The Noise

Issue

To extract

color

information, some subtraction must be done. The

signals subtract but

the noise adds (in quadrature). The noise

discussion becomes more

concrete when one can say exactly what subtraction

will be done. The

camera’s orthonormal basis is a natural to be the

canonical transformed

sensitivity. Orthogonality means “no redundancy”

and normalization

standardizes the amplitude. There’s a numerical

noise example worked

out in the CIC 14 article. For now, the point is

that expressing camera

sensitivities as an orthonormal basis is a giant

step towards dealing

with noise.
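The quadrature rule is easy to state in code. Assume the noise in each input channel is independent, with standard deviation $\sigma_i$. Then a weighted combination $\sum_i w_i s_i$ has noise $\sigma = \sqrt{\sum_i w_i^{2}\sigma_i^{2}}$. The numbers below are hypothetical. They show a difference of two strong signals leaving a small signal with larger noise.

```python
import math

def combined_noise(weights, sigmas):
    """Std. dev. of sum(w_i * s_i) for independent channel noises sigma_i."""
    return math.sqrt(sum((w * s) ** 2 for w, s in zip(weights, sigmas)))

signals = [10.0, 8.0]    # hypothetical channel signals
sigmas = [3.0, 4.0]      # hypothetical channel noise (std. dev.)
weights = [1.0, -1.0]    # an opponent-style difference

signal_out = sum(w * s for w, s in zip(weights, signals))
noise_out = combined_noise(weights, sigmas)
print(signal_out, noise_out)   # 2.0 5.0
```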

|

|

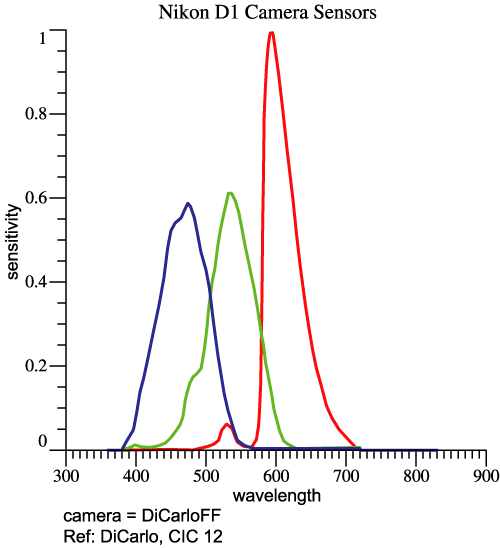

| Camera

Example, Nikon D1 |

| The last 4 graphs above

pertained

to the Nikon D1, based on data from CIC 12. At right

are the camera’s

red,

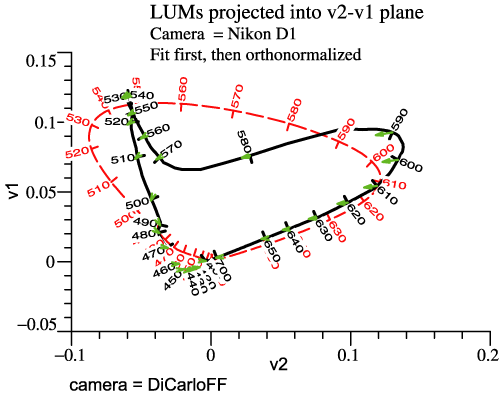

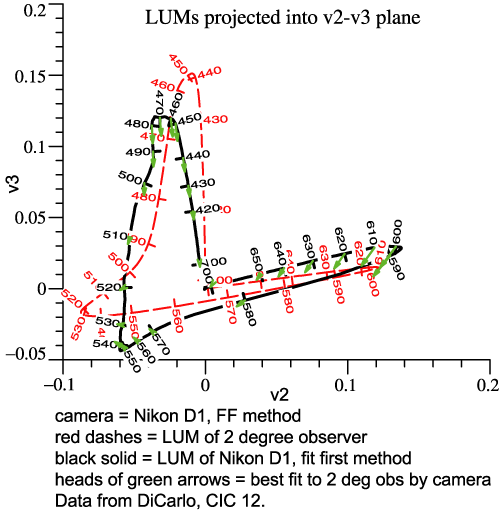

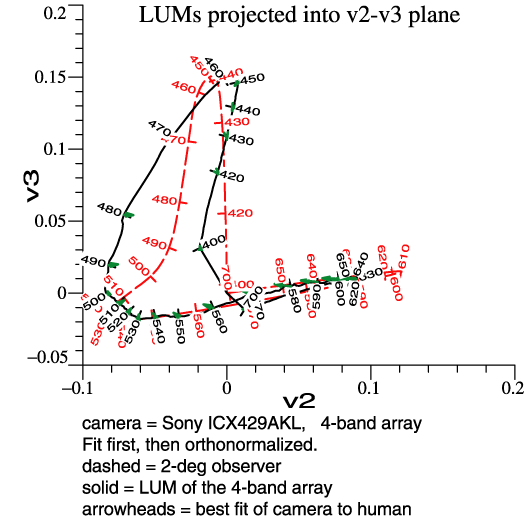

green, and blue sensitivities. The camera’s LUM can be compared to the eye’s. Rather than another perspective picture, we now view the LUMs in orthographic projection (2 graphs below). The dashed curves are the human locus. The solid curves are the camera’s locus, while the tips of the small green arrows are points on the best-fit sensitivity function. |

Now you may say, “These curves mean nothing to me!” That may be so at first, but the graphs contain a complete description of the camera sensor, with no hidden assumptions and no information discarded.

Finding Some Meaning in the Camera's LUM

Consider the left-hand graph, “LUMs projected into v2-v1 plane.” Only the red and green receptors contribute to the human LUM in this view, and v1 is the achromatic dimension for human, based on good old y-bar. In this plane at least, this particular camera tends to confuse wavelengths in the interval 510 to 560 nm, which are nicely spread out as stimuli for human. Yellows, say 560 to 580 nm, have lower whiteness than they would for human. The camera has other differences from human that may be harder to verbalize. To the extent that finished photos look wrong, one could revisit these graphs for insight.

More Examples

Five detailed examples were prepared in 2006, and they are linked from the further examples page. For instance, Quan’s optimal sensor set indeed looks good in any of the graphical comparisons to the 2° observer. (See http://www.cis.rit.edu/mcsl/research/PDFs/Quan.pdf .)

|

| Something

Completely

Different: a 4-band Array Nominal

time: 3:08 pm

|

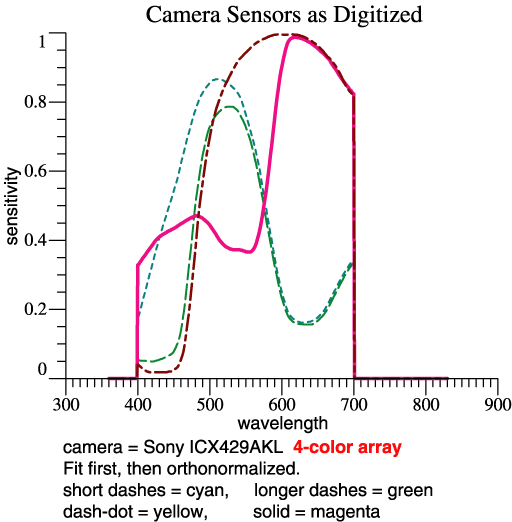

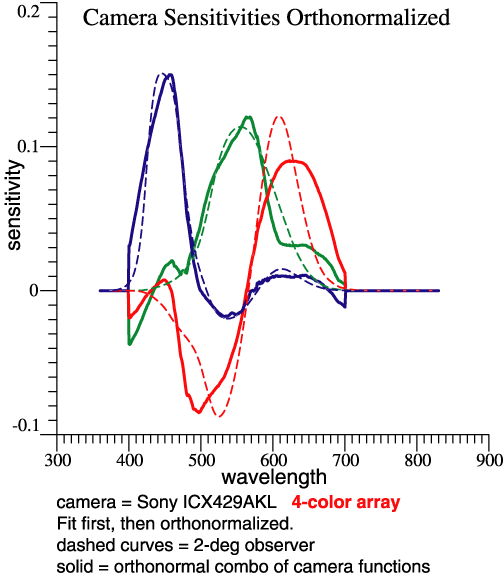

| Sony publishes a

specification for

a 4-band sensor array, the ICX429AKL. I’m not sure

of the intended

application, but it could potentially be applied in

a normal

trichromatic camera. The Fit First Method readily

fits the 4 sensors to

the 3-function orthonormal basis. |

The four sensitivities are seen at right. The key steps look the same:

`Rcam = RCohen(rgbSens)`

`CamTemp = Rcam*OrthoBasis`

`GramSchmidt(CamTemp, CamOmega)`

Recall that the projection matrix Rcam is a big square matrix of dimension N×N, where N is the number of wavelengths, which might be 471. The 4th sensor adds a column to the array rgbSens, but does not change the dimensions of the result Rcam. After the key "fit first" steps, I did have to rethink some auxiliary calculations because of the 4-column sensor matrix.
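The dimension claim can be checked quickly with exact arithmetic and hypothetical sensor data (6 samples, 4 columns). R is N×N whatever the number of sensor columns. Its trace equals the number of linearly independent columns k, since $\operatorname{trace}(A(A^{T}A)^{-1}A^{T}) = \operatorname{trace}((A^{T}A)^{-1}A^{T}A) = k$. So with 4 independent sensors, Rcam projects onto a 4-dimensional space, and each ωj is fit by a combination of 4 functions.

```python
from fractions import Fraction

def transpose(M):
    return [list(row) for row in zip(*M)]

def matmul(P, Q):
    Qt = transpose(Q)
    return [[sum(p * q for p, q in zip(row, col)) for col in Qt] for row in P]

def inverse(M):
    """Exact Gauss-Jordan inverse of a square, invertible matrix."""
    n = len(M)
    aug = [[Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
           for i, row in enumerate(M)]
    for c in range(n):
        p = next(r for r in range(c, n) if aug[r][c] != 0)
        aug[c], aug[p] = aug[p], aug[c]
        pivot = aug[c][c]
        aug[c] = [x / pivot for x in aug[c]]
        for r in range(n):
            if r != c and aug[r][c] != 0:
                f = aug[r][c]
                aug[r] = [x - f * y for x, y in zip(aug[r], aug[c])]
    return [row[n:] for row in aug]

def r_cohen(A):
    """Projection matrix A (A^T A)^-1 A^T."""
    At = transpose(A)
    return matmul(matmul(A, inverse(matmul(At, A))), At)

# Hypothetical 4-band sensor data: 6 samples (rows) x 4 sensors (columns).
sens4 = [[1, 0, 0, 0],
         [1, 1, 0, 0],
         [0, 1, 1, 0],
         [0, 0, 1, 1],
         [0, 0, 0, 1],
         [1, 0, 0, 1]]
sens3 = [row[:3] for row in sens4]   # the same array without the 4th sensor

for sens in (sens3, sens4):
    R = r_cohen(sens)
    trace = sum(R[i][i] for i in range(len(R)))
    print(len(R), len(R[0]), trace)   # 6 6 3, then 6 6 4
```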

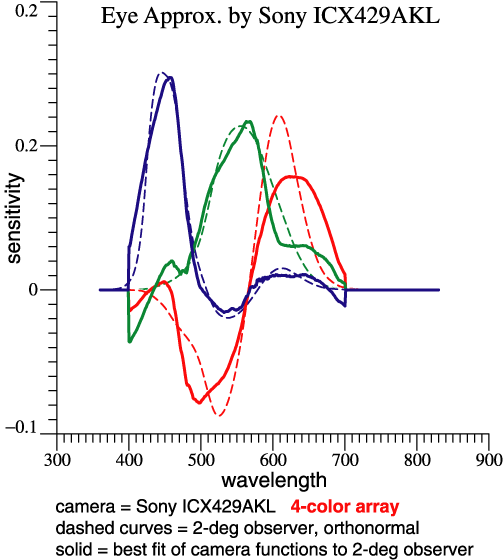

|

| The camera's

orthonormal basis

Rcam comprises 3 vectors that are linear

combinations of the 4 vectors

in rgbSens. I wanted to find the 4x3

transformation matrix relating the

one to the other, in order to stimulate thinking

about noise. The

program output itself explains the method as

follows: Similar

to Eqs. 15-18

in CIC 14 paper,

Transform from sensors to CamOmega: We want to solve CamOmega = rgbSens * Y , where Y is coeffs for 3 lin. combs. MPP = inv(rgbSens'*rgbSens) * rgbSens' Y = MPP*CamOmega = 0.24845 0.13737 0.35971 -0.34663 -0.43708 -0.45585 -0.22433 -0.088434 -0.033503 0.26839 0.22522 0.055984 Column amplitudes = vector lgth of each column = 0.55158 0.51812 0.58433 The columns of rgbSens actually contain the 4 sensitivities, cyan, green, yellow, magenta. MPP is the Moore-Penrose Pseudoinverse. (See Wikipedia and pp. 9-10 in "notes.") Keeping in mind that the sensitivities are all >= 0, matrix Y gives some idea how much subtraction is done to produce the sensor chip’s orthonormal basis. That’s a step toward thinking about noise. Below are the 3 orthonormal functions, and also the 3 best-fit functions made from the 4 camera sensitivities. The only source of “noise” is the errors that I introduced while converting graphs to numbers. It becomes more visible here, after subtractions. |
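The "column amplitudes" are Euclidean lengths of the columns of Y, so they can be recomputed from the printed matrix. Now suppose, as an assumption, that the 4 sensors had independent noise of equal standard deviation σ. The quadrature rule would then make each orthonormal output's noise equal to σ times that column length. That is one reason the amplitudes are a useful noise indicator.

```python
import math

# Y as printed above: rows = sensors (cyan, green, yellow, magenta),
# columns = the 3 orthonormal camera functions.
Y = [[0.24845, 0.13737, 0.35971],
     [-0.34663, -0.43708, -0.45585],
     [-0.22433, -0.088434, -0.033503],
     [0.26839, 0.22522, 0.055984]]

amplitudes = [math.sqrt(sum(row[j] ** 2 for row in Y)) for j in range(3)]
print(" ".join(f"{a:.4f}" for a in amplitudes))   # 0.5516 0.5181 0.5843
```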

Some noise also shows up in the projections of the LUM, below. It would appear that color fidelity is not good; reds and oranges may lose some redness.

| Combining

LEDs to Make a White Light? |

| In

the

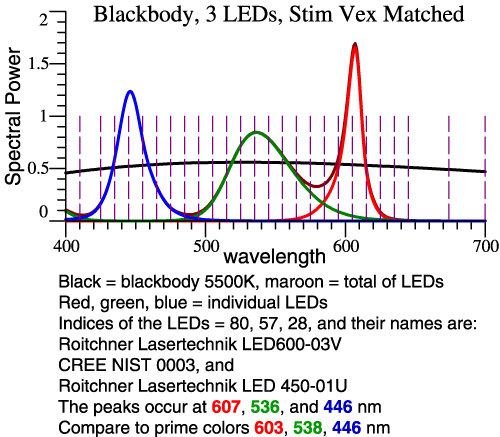

1970s, William A. Thornton asked an interesting

question: If you

would make a white light from 3 narrow bands, how

would the choice of

wavelengths affect vision of object colors under the

light? His

research led to the Prime Colors, a set of

wavelengths that reveal

colors well. From that start, he invented 3-band

lamps and was named

Inventor of the Year in 1979. He continued his

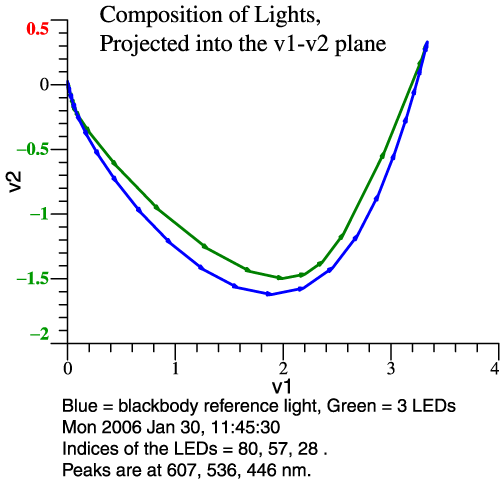

research and made the

definition of prime colors more precise. Problem Statement: For the 2° Observer, Thornton’s Prime Colors are 603, 538, 446 nm. [See CIC 6, and Michael H. Brill and James A. Worthey, "Color Matching Functions When One Primary Wavelength is Changed," Color Research and Application, 32(1):22-24 (2007). Also see Wavelengths of Strong Action, above.] If you would make a light with 3 narrow bands at those wavelengths, the light would tend to enhance red-green contrasts, making some colors more vivid, though it would do a bad job with saturated red objects. You might think then that a white light could be made from 3 LEDs whose SPDs peak at those wavelengths. This idea falls short because LEDs are not narrow-band lights. Our task then is to see what happens when real LEDs are combined, and design a good combination by speedy trial-and-error. We'll see two graphs per example: the LED spectra and their sum, and then the vectorial composition of the LED light in comparison to 5500 K blackbody. Clicking either image gives more detailed information. Example 1: Let LED

Peaks ≈ Prime Colors

The reference white is 5500 Kelvin blackbody.

From 119

types measured by Irena Fryc, 3 LEDs are chosen by

their peak

wavelengths, as shown at left. Then we see at right

that the blackbody

(blue line) makes a bigger swing to green and back.

This LED combo will

dull most reds and greens. |

|

|

|

|

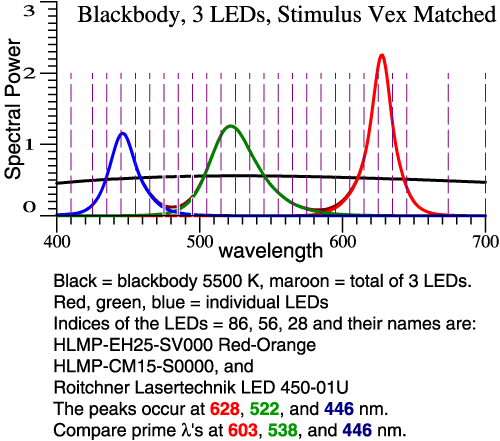

Example

2: Greener

Green and Redder Red

To increase the swing towards green and back,

we let

the green be greener (shorter λ)

and the red be

redder (longer λ).

In all

cases, the LED amplitudes are adjusted so the total

tristimulus vector

matches the blackbody. |
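Adjusting the LED amplitudes is a 3×3 linear solve. Let column i of a matrix M hold the tristimulus vector of LED i at unit amplitude. Then the amplitudes k satisfy $Mk = V_{\mathrm{ref}}$. The minimal sketch below uses Cramer's rule and exact fractions, and the LED vectors and target are hypothetical.

- For the 2-red design that follows, the two reds in fixed proportion can be treated as one composite primary whose vector is the weighted sum of theirs.
- A negative amplitude would mean that the target cannot be reached with those LEDs.

```python
from fractions import Fraction

def det3(m):
    """Determinant of a 3x3 matrix given as a list of rows."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def led_amplitudes(led_vectors, target):
    """Solve M k = target by Cramer's rule; M's columns are the LED vectors."""
    M = [list(row) for row in zip(*led_vectors)]
    d = det3(M)
    if d == 0:
        raise ValueError("LED vectors are not linearly independent")
    amps = []
    for j in range(3):
        Mj = [row[:j] + [t] + row[j + 1:] for row, t in zip(M, target)]
        amps.append(Fraction(det3(Mj)) / d)
    return amps

# Hypothetical tristimulus vectors (unit amplitude) for red, green, blue LEDs.
red, green, blue = [1, 0, 1], [0, 1, 1], [2, 1, 0]
reference_white = [8, 4, 3]   # hypothetical target (the blackbody's vector)

# A composite red from two reds mixed 3:1 in fixed proportion (hypothetical).
red_a, red_b = [1, -1, 1], [1, 3, 1]
w = Fraction(3, 4)
composite_red = [w * a + (1 - w) * b for a, b in zip(red_a, red_b)]

print(led_amplitudes([red, green, blue], reference_white))
print(led_amplitudes([composite_red, green, blue], reference_white))
```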

|

|

|

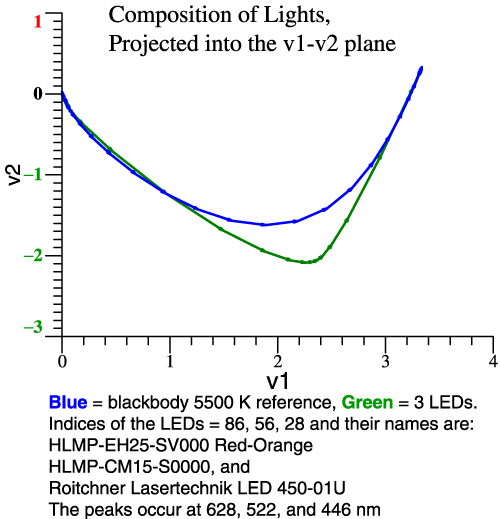

| Still

on example 2, we can see

from the vector composition (right-hand graph) that

red-green contrast

will be good, but some colors may be particularly

distorted. Clicking the

left-hand graph confirms that idea in the

color shifts of the 64

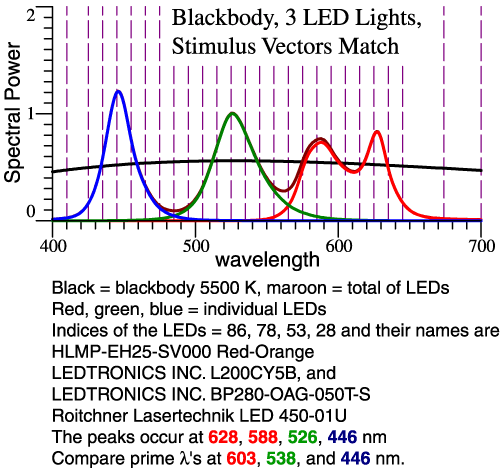

Munsell papers. Example 3: Broaden

the Red Peak

The problem in example 2 is known to some

lighting

experts.

The light needs to have a broader range of reds. The

remedy is to use 2

red LEDs. For simplicity, the proportion of the 2

reds is fixed, not

adjustable. |

Think of those green and red limit colors. Some of those colors will still be dulled, but we are tracking the blackbody pretty closely. Further tweaking is possible.

Vectorial Color

Invariants

Food for Thought ...

The End

Thank you very much for signing up and for your attention. Please feel free to contact me at any time. I am always eager to discuss lighting, color, cameras, etc.

Jim Worthey

Special Credit

William A. Thornton

Michael H. Brill

(But MacAdam gets a demerit for disparaging Cohen's work.)

Calculations were done with O-matrix software.

William A. Thornton, 1923-2006

Jozef B. Cohen, 1921-1995

Bonus Picture: Jim Worthey, Jozef Cohen, Nick Worthey, circa 1993

Lighting for a Copy Machine??

So, We Know How to Design a Light. Now Let the Camera Also Be a Variable.

Let the lights be the last pair demonstrated above. The reference white is 5500 K blackbody. The 2 red LEDs are in fixed proportion, but the R, G, and B LEDs are adjusted to match the blackbody. The adjustment of R, G, B is done for human, and then separately for the camera. Using the Fit First method, it is possible to graph the human and camera sensitivities together, and then the compositions of the lights as seen by human and by camera.

Bonus Example: Multi-primary System (N > 3)

Stop

Scroll No Farther

Material Below Addresses Obscure Questions