
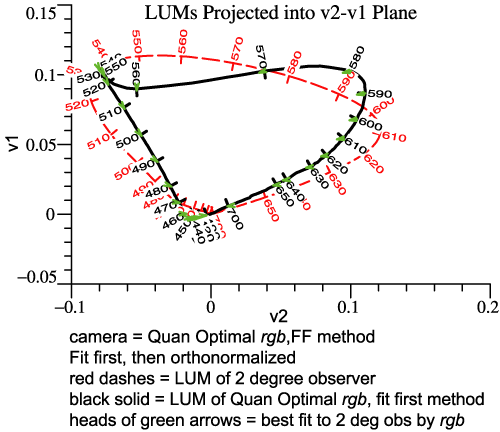

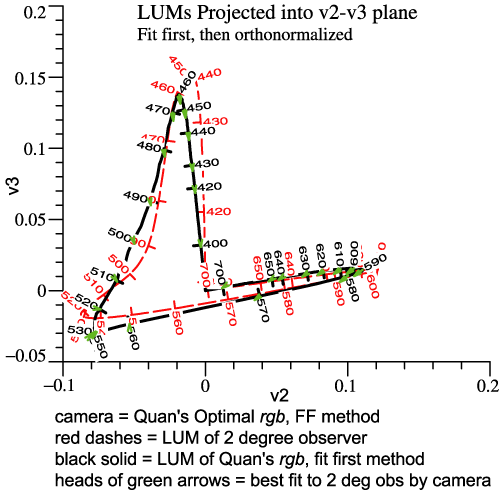

In the virtual reality picture above, the Locus of Unit Monochromats (LUM) for the human 2° observer is drawn in the usual way, as the edge of the multicolored surface. The LUM of the camera is indicated by spheres. The short arrows run from the camera's LUM to the best fit to the human LUM, so the arrow tips trace the best-fit curve. If the spheres lay right along the human LUM, the camera would fulfill the Maxwell-Ives criterion. In that case, the camera's LUM would be the best fit, and the arrows would have zero length.

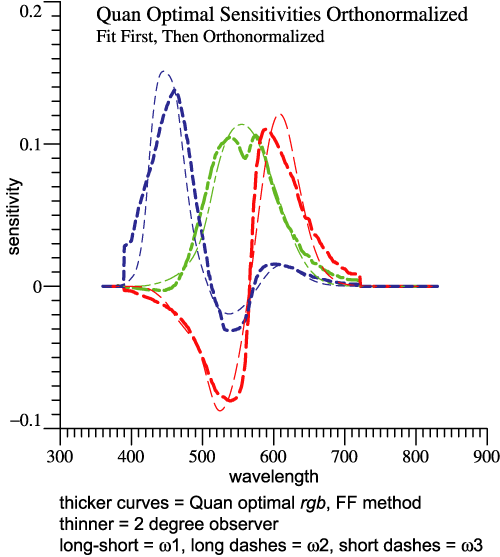

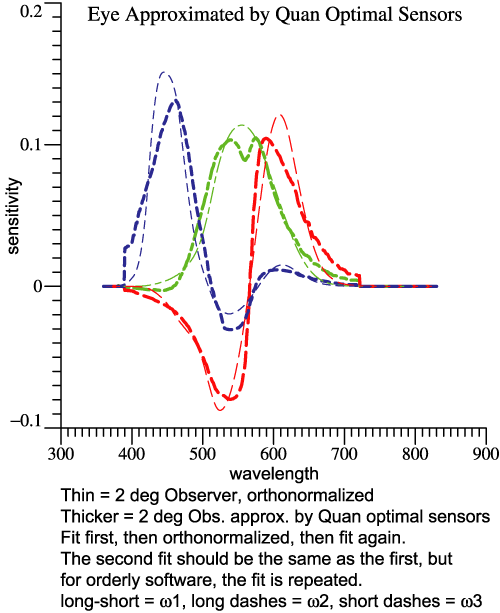

The camera LUM is derived by the "fit first" steps:

- Find a best fit to the human LUM by a linear combination of the camera's sensor functions. The best-fit functions will look more or less like achromatic, red-green, and blue-yellow functions, but they will not be an orthonormal set.
- Preserving that sequence, orthonormalize the functions by the Gram-Schmidt method.
- Combining those 3 functions into a 3-dimensional graph gives the camera LUM, indicated by spheres.
- Yes, the best fit found as an intermediate step is the same best fit indicated by the tips of the short arrows.
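The steps above can be sketched in a few lines of pure Python. This is an illustration under stated assumptions, not the author's code. "Best fit" is taken to mean an ordinary least-squares fit at the sampled wavelengths, since the text does not name the criterion. Each function is stored as one column of a list-of-rows matrix. The helper names (`best_fit`, `gram_schmidt`, `fit_first`, `arrow_lengths`) and the 4-wavelength toy data are hypothetical. The sketch also illustrates the Maxwell-Ives case described earlier: when the sensors are linear combinations of the human functions, the arrows should have zero length.

```python
# Minimal pure-Python sketch of the "fit first" steps (illustrative only).
# Assumptions, not from the original: "best fit" = ordinary least squares over
# the sampled wavelengths; matrices are lists of rows (rows = wavelengths,
# columns = functions); all names and numbers below are hypothetical.


def transpose(a):
    return [list(col) for col in zip(*a)]


def matmul(a, b):
    bt = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in bt] for row in a]


def inv(a):
    """Invert a small square matrix by Gauss-Jordan elimination."""
    n = len(a)
    m = [[float(v) for v in row] + [float(i == j) for j in range(n)]
         for i, row in enumerate(a)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(m[r][c]))  # partial pivoting
        m[c], m[p] = m[p], m[c]
        pivot = m[c][c]
        m[c] = [v / pivot for v in m[c]]
        for r in range(n):
            if r != c:
                f = m[r][c]
                m[r] = [v - f * w for v, w in zip(m[r], m[c])]
    return [row[n:] for row in m]


def best_fit(human_lum, sens):
    """Step 1: least-squares fit of each human LUM column by a linear
    combination of sensor columns: sens * inv(sens'*sens) * sens'*human_lum."""
    st = transpose(sens)
    coeffs = matmul(inv(matmul(st, sens)), matmul(st, human_lum))
    return matmul(sens, coeffs)


def gram_schmidt(a):
    """Step 2: orthonormalize the columns of a, preserving their order."""
    basis = []
    for col in transpose(a):
        v = list(col)
        for q in basis:
            d = sum(x * y for x, y in zip(v, q))
            v = [x - d * y for x, y in zip(v, q)]
        norm = sum(x * x for x in v) ** 0.5
        basis.append([x / norm for x in v])
    return transpose(basis)


def fit_first(human_lum, sens):
    """Return (best fit, camera LUM). Step 3: row k of each is a 3-D point."""
    fit = best_fit(human_lum, sens)
    return fit, gram_schmidt(fit)


def arrow_lengths(cam_lum, fit):
    """Length of each short arrow, from a camera LUM point to its best-fit tip."""
    return [sum((f - c) ** 2 for f, c in zip(fr, cr)) ** 0.5
            for fr, cr in zip(fit, cam_lum)]


# Toy "human LUM": 4 wavelengths, 3 orthonormal columns (hypothetical).
HUMAN = [[0.5, 0.5, 0.5], [0.5, 0.5, -0.5], [0.5, -0.5, 0.5], [0.5, -0.5, -0.5]]
# Camera meeting Maxwell-Ives: its sensors are mixtures of the human functions.
MI_SENS = matmul(HUMAN, [[2.0, 1.0, 0.0], [1.0, -1.0, 0.0], [0.5, 0.5, 3.0]])
# Camera that does not: its sensors leave the human 3-D subspace.
OTHER_SENS = [[1.0, 0.2, 0.0], [0.8, 0.5, 0.1], [0.3, 0.9, 0.4], [0.1, 0.3, 1.0]]

for name, sens in [("Maxwell-Ives camera", MI_SENS), ("other camera", OTHER_SENS)]:
    fit, cam = fit_first(HUMAN, sens)
    print(name, [round(x, 4) for x in arrow_lengths(cam, fit)])
```

For the Maxwell-Ives camera, the best fit reproduces the human functions, and Gram-Schmidt leaves them unchanged, so the arrow lengths come out as zero to rounding error. For the other camera, the best-fit functions are not orthonormal, so orthonormalizing moves them and the arrows have nonzero length.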

The steps just specified all amount to adding and subtracting weighted copies of the camera sensor functions. We can then ask whether the red-green function is really made by subtracting the camera's green function from its red function, and so forth. Such questions are answered by the transformation matrix relating the camera's orthonormal basis to the sensor functions. Let the camera sensors be the columns of array rgbSens, and let CamOmega be the camera's orthonormal basis. Then

CamOmega = rgbSens*Y, where

Y = inv(CamOmega'*rgbSens).

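The tiny apostrophe, ', denotes matrix transpose. Because the columns of CamOmega are orthonormal, `CamOmega'*CamOmega` is the identity matrix. Multiplying `CamOmega = rgbSens*Y` on the left by CamOmega' therefore gives `I = CamOmega'*rgbSens*Y`, which yields the formula for Y. The transform Y can be found for any camera, and indeed for the eye itself.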
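The formula for Y can be checked numerically. Below is a minimal pure-Python sketch. The helper names (`sensor_transform`, `MIX`) and the 4-wavelength numbers are hypothetical, not real camera data. It builds sensor functions as known mixtures `MIX` of an orthonormal basis, so the recovered Y should be the inverse of `MIX`. It then confirms that `rgbSens*Y` gives back CamOmega.

```python
# Sketch: the transform Y with CamOmega = rgbSens*Y (pure Python).
# Matrices are lists of rows (rows = wavelengths, columns = functions);
# names and numbers are hypothetical.


def transpose(a):
    return [list(col) for col in zip(*a)]


def matmul(a, b):
    bt = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in bt] for row in a]


def inv(a):
    """Invert a small square matrix by Gauss-Jordan elimination."""
    n = len(a)
    m = [[float(v) for v in row] + [float(i == j) for j in range(n)]
         for i, row in enumerate(a)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(m[r][c]))  # partial pivoting
        m[c], m[p] = m[p], m[c]
        pivot = m[c][c]
        m[c] = [v / pivot for v in m[c]]
        for r in range(n):
            if r != c:
                f = m[r][c]
                m[r] = [v - f * w for v, w in zip(m[r], m[c])]
    return [row[n:] for row in m]


def sensor_transform(cam_omega, rgb_sens):
    """Y = inv(CamOmega'*rgbSens); valid when CamOmega has orthonormal
    columns that lie in the span of the sensor columns."""
    return inv(matmul(transpose(cam_omega), rgb_sens))


# Toy check: an orthonormal basis and sensors that are known mixtures of it,
# so Y should come out as the inverse of MIX.
CAM_OMEGA = [[0.5, 0.5, 0.5], [0.5, 0.5, -0.5], [0.5, -0.5, 0.5], [0.5, -0.5, -0.5]]
MIX = [[2.0, 1.0, 0.0], [1.0, -1.0, 0.0], [0.5, 0.5, 3.0]]
RGB_SENS = matmul(CAM_OMEGA, MIX)

Y = sensor_transform(CAM_OMEGA, RGB_SENS)
for row in Y:  # row i: weights of sensor i in each orthonormal function
    print([round(v, 4) for v in row])
```

Here Y equals the inverse of `MIX`, namely [[1/3, 1/3, 0], [1/3, -2/3, 0], [-1/9, 1/18, 1/3]]. Reading down a column of Y shows how much of each sensor goes into one orthonormal function.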

2°

observer himself

|

Nikon

D1,

DiCarlo's data

|

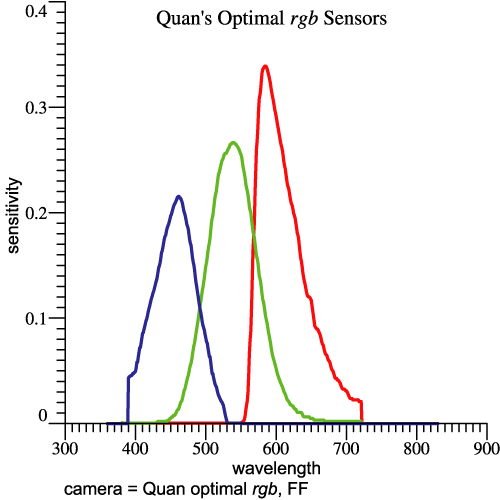

Quan's

Optimal Sensors

|

Y =

inv(OrthoBasis'*rgbbar) =

0.0725

|

0.267 |

0.0376 |

| 0.0447 |

-0.310 |

-0.0543 |

0

|

0

|

0.138 |

|

Y = inv(CamOmega'*rgbSens)

=

0.0614

|

0.151

|

0.0375

|

0.198

|

-0.107 |

-0.0865

|

-0.0221

|

-0.0345

|

0.227

|

|

Y = inv(CamOmega'*rgbSens)

=

0.164

|

0.413 |

0.0748

|

0.393

|

-0.303 |

-0.118 |

-0.0221

|

-0.0680

|

0.648

|

|

Column amplitudes =

0.0852

0.409 0.153

|

Column amplitudes =

0.208 0.189

0.246

|

Column amplitudes =

0.426

0.516 0.663

|

|
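The column amplitudes listed for each Y appear to be the Euclidean lengths of the columns of Y. Recomputing them from the printed, already rounded entries reproduces the listed values to within 0.001. The following is a minimal check under that interpretation; the dictionary names are hypothetical, and the Y entries are transcribed row by row from the table.

```python
import math

# Y matrices transcribed row by row from the table (rows = sensors,
# columns = orthonormal functions), plus the printed column amplitudes.
Y_TABLE = {
    "2-degree observer": [[0.0725, 0.267, 0.0376],
                          [0.0447, -0.310, -0.0543],
                          [0.0, 0.0, 0.138]],
    "Nikon D1": [[0.0614, 0.151, 0.0375],
                 [0.198, -0.107, -0.0865],
                 [-0.0221, -0.0345, 0.227]],
    "Quan optimal": [[0.164, 0.413, 0.0748],
                     [0.393, -0.303, -0.118],
                     [-0.0221, -0.0680, 0.648]],
}
PRINTED_AMPLITUDES = {
    "2-degree observer": [0.0852, 0.409, 0.153],
    "Nikon D1": [0.208, 0.189, 0.246],
    "Quan optimal": [0.426, 0.516, 0.663],
}


def column_amplitudes(y):
    """Euclidean length of each column of y."""
    return [math.sqrt(sum(row[j] ** 2 for row in y)) for j in range(len(y[0]))]


for name, y in Y_TABLE.items():
    amps = column_amplitudes(y)
    print(name, [f"{a:.3g}" for a in amps], "printed:", PRINTED_AMPLITUDES[name])
```

Two values differ in the last digit: 0.188 against 0.189 for the Nikon, and 0.517 against 0.516 for Quan's sensors. This is consistent with the Y entries having been rounded before printing.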